BERT was not designed to generate text at scale – its intrinsic purpose is to predict missing words in a sentence – however it has been widely applied to text summarization tasks to summarize last chunks of text to be used in titles and meta descriptions which are SEO friendly by default. This article will discuss how BERT can be used for automated text summarization to generate meta descriptions and the like and whether there is any benefit to generate meta description with bert over other automated text summarization techniques.

What is a meta description?

Before we dive in, let us get the jargon out of the way. If you’re completely new to the game you may be wondering what a meta description is. This is an HTML attribute or tag which provides a short description of the contents of a web page. This content of the attribute is frequently displayed in Google (or other search engine) search results right beneath the link the web page. Although the meta description tag can be any length Google tends to truncate it to around 155-160 characters.

Generating meta descriptions using BERT

BERT can be used to perform automated text summarization. This text can then be used as meta descriptions, titles, passages etc… depending on the length of text you choose to generate. Since the process by which text is summarized is an automated approach, as the term automated implies, then this can be performed at scale for every single webpage on your site.

A quick word on automated text summarization

There are two man approaches for automated text summarization: extractive and abstractive.

The extractive approach analyses each sentence within the text, by which each sentence is scored on its adequacy at summarizing the entire chunk of text. In the extractive approach, the summary contains popular sentences from the article. Extractive text summarization undertakes an unsupervised machine learning approach.

On the other hand, the abstractive approach generates new sentences that aim to retain the essence of the text found in the article. Abstractive text summarization is a deep learning approach, which means that the rules by which the algorithm creates sentences are generated by the algorithm itself and are therefore not easily deciphered.

One might say that these two approaches can also be used to describe the way we, as humans, summarize text, with some of us opting for a cut and paste approach of key sentences, whilst others generate new sentences from scratch that capture the gist of the content. Although both summarization techniques can produce promising results, the best approach for creating meta descriptions using BERT is the extractive approach. However many non-committal guides suggest trying both techniques and then deciding which process produces the best results.

Generate your own text summaries with BERT

Now that we have discussed the overall approaches which can be used to generate summaries of text, to be used as headers, meta descriptors or summaries, let us discuss the practicalities of carrying this out:

Using out-of-the-box state-of-the-art (SOTA) code for text summarization

If your aim is to simply generate text for your webpage you needn’t reinvent the wheel and out-of-the-box automated text summarization code should more than suffice. With such a strong online community, GitHub is an excellent place to search for such code. Github is an online platform where coders, and companies, from all backgrounds – ranging from novices to absolute wizards – share their code with the public. Most serious repositories contain README files with step-by-step guides on how to carry out this task. A basic level of coding knowledge (or thirst for knowledge!) is suggested for this task, and for the entire scope of this article in general. If you are very new to coding it is suggested to search for text summarization algorithms which make use of pretrained encoders.

The Bert Extractive Summarizer in Python

If you are a Python coder and looking for a simpler solution you may want to have a look at the Bert Extractive Summarizer which comes in the form of a very hand Python library. This nifty Python library has also been implemented as an online demo where you can test out chunks of code before biting the bullet and implementing this for yourself. The underlying algorithms covered by the code available in this Python library is discussed in depth in this paper and is also available as a GitHub repository.

Side note: If you have never coded in Python before (or in your life, for that matter) I strongly suggest installing Anaconda which is a Python distribution which allows you to write, debug, test and run programmes in the Python language. Once that is out of the way have a look at this tutorial on how to write a simple Python program/script.

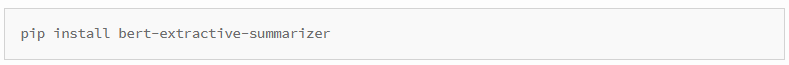

In order to write your own code with the Bert Extractive Summarizer library you must first install this library in your environment. Library handling can be a pain in the neck for larger more complex programs, however for this example we can simply install this library using the following in the Anaconda Prompt window. You can open this window by following these instructions.

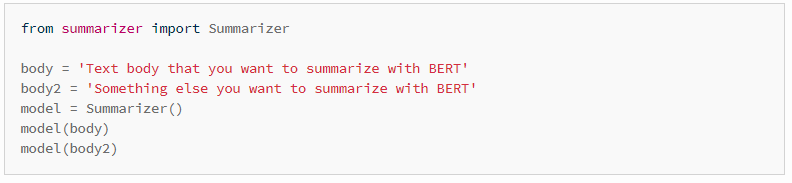

Once the library is installed, you may type out a simple script to perform automated text summarization. Here is a simple example template using this library:

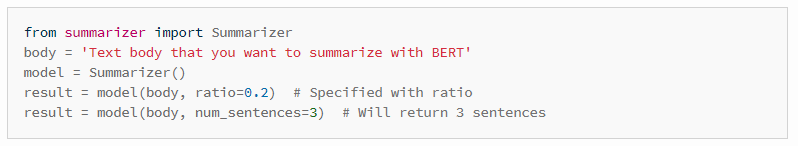

If you wish you may also specify the number of sentences to be returned by the model, as follows:

These examples have all been taken from the Bert Extractive Summarizer library, where you may find more complex examples and detailed documentation.

Let’s test this out ourselves

In order to test this out we must first choose a webpage as our guinea pig.

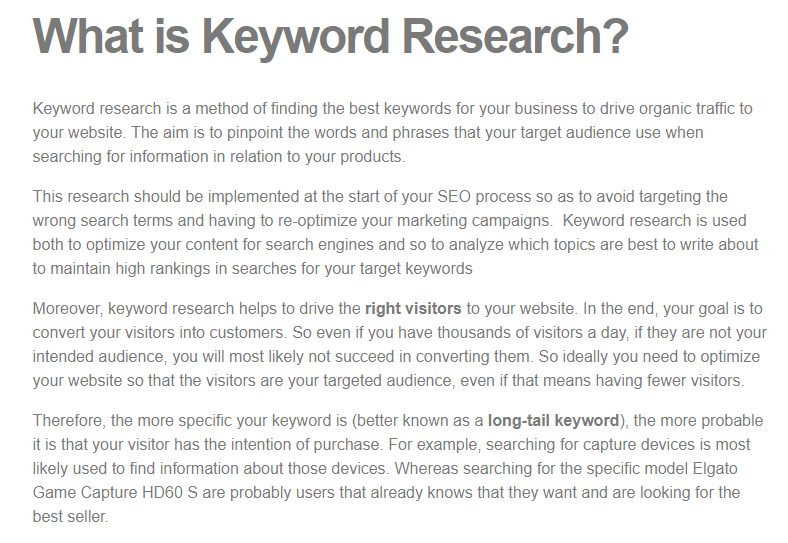

Experiment 1: For my first experiment I have decided to use a paragraph of text from one of our previous articles for this example to assess whether BERT comes up with an adequate meta description. The article is entitled “What’s the first step in the search engine optimisation process for your website?” and the paragraph is entitled “What is keyword research?”. Since Google search results only show around 155-160 characters of the meta description it would be suitable to generate two sentences as a summary of this text, however for this example I simply allowed the model to do its magic.

Here is the input text:

Here is the output text:

‘Keyword research is a method of finding the best keywords for your business to drive organic traffic to your website. The aim is to pinpoint the words and phrases that your target audience use when searching for information in relation to your products.’

In this case it is clear that the algorithm has simply used the first two sentences of the input text. Although these two sentences are perfectly adequate for summarizing the entire section of text, it is worth investigating further to check whether this is a simple coincidence or a cheeky trick.

Experiment 2: For my second experiment I chose another article entitled “How to make money on Tumblr”. Here is the extracted section I used:

Here is the output text:

‘In order to start making money on Tumblr, it’s important to understand why it became so successful. At its peak, Tumblr was the place to share photos, graphics and snippets of text.’

In both experiments the algorithm has done a very good job at text summarization and although the examples presented here are isolated and simple it is very easy to appreciate the potential of extractive automated text summarization. In both experiments the summative text generated could easily suffice as a meta description.

What is the benefit of using BERT over other automated text summarization techniques?

As one can expect there are many other automated text summarization techniques, and most are available as open-source repositories on GitHub and the like. If you are interested in learning more about some popular approaches on an algorithmic level, then here is a good review article on this subject. In the examples above we have seen how BERT ranks each sentence according to its adequacy at summarizing the section of text. Similarly, in passage indexing, BERT is used to rank passages on a webpage according to their adequacy in response to a use search query. The most adequate passages are then chosen as featured snippets and are often positioned above the top ranking webpages on a Google search results page. This is a very important point to consider when deciding on an approach for generating meta descriptions as by using the very same algorithm to generate meta descriptions (or other summative texts) we are ensuring that we put our best foot forward as we are playing by Google’s (or BERT’s) very own ranking rules.

Final Thoughts – Is BERT Worth Trying?

BERT is still one of the most recent developments by Google AI, and as of yet people around the globe are still experimenting on ways to apply this algorithm, both for academic purposes as well as to understand its potential in the SEO game. Although there are many algorithms available to play around with, BERT has been adapted by programmers the world over into online toolboxes, repositories and Python libraries making it easy to use by even the most novice of programmers. With the help of this fantastic AI natural language tool, anyone with coding knowledge can generate SEO optimized texts, including meta descriptions. By using BERT to generate summative texts, such as meta descriptions, at scale one is not only reducing costs and improving efficiency. In addition to this, but using BERT to generate text one is also ensuring that the algorithm by which they choose their meta descriptions is the very same one that understands user intent, therefore one is capitalizing on those unknown rules by which BERT ranks importance of text in passage indexing, user intent etc… If your aim is to land a spot as a featured snippet or one of the top 3 coveted links on SERP, then choosing whether to use BERT or not is a no-brainer!

Dr Michaela Spiteri BEng, MSc, PhD (AI / Healthcare domain), is a well-published researcher in the field of AI and machine-learning. She is the founder of AI consultancy Analitigo Ltd. Currently working as the lead researcher at Gainchanger.